TL;DR

As AI-generated prototypes compress the gap between idea and artifact, the designer's role shifts from producing high-fidelity outputs to ensuring high-fidelity thinking. The teams that move fastest without that discipline will build the wrong thing, quickly and convincingly.

The Framework That No Longer Fits

In The Staff Designer, Sherrod Sipp makes a sharp observation about design fidelity: when a mockup looks too polished too early, stakeholders stop evaluating the concept and start nitpicking the pixels. The solution, he argues, is deliberate roughness (sketches, wireframes, lo-fi themes) to keep conversations anchored in strategy rather than surface.

It's a smart framework. But it was built for a world where designers controlled the means of production. That world is long gone.

Today, a product manager can go from an AI-transcribed customer meeting to an AI-generated PRD to a working prototype in Lovable or Claude Code, all in a single afternoon and before a designer has even been briefed. The old fidelity ladder assumed only designers could make things feel real. That gatekeeping no longer constrains PMs and engineers. So what does fidelity mean now? And where does design thinking fit in a workflow that can skip straight to a functional prototype?

From High-Fidelity to High-Tangibility

The traditional fidelity problem was that things look too polished too early. The new problem is that things feel too real too early.

A vibe-coded prototype isn't just high-fidelity in the traditional sense. It has real interactions, real layout, sometimes real data. It's high-tangibility, and tangibility is far more persuasive than a static mockup ever was. Once a stakeholder or customer clicks through a working prototype, it becomes the mental anchor for what the product is. Every subsequent design exploration gets measured against that first tangible thing.

Sipp warns that polished mockups shift conversations from "is this the right idea?" to visual nitpicking. But a functional prototype shifts the conversation even further: "Why would we change what already works?" That's a much harder gravitational pull to escape.

The AI Telephone Problem

Consider the chain that produces a modern vibe-coded prototype: a customer meeting becomes an AI transcript, then an AI-summarized set of notes, which feeds into an AI-generated PRD, which becomes a prompt, which produces a working prototype. Each step compresses nuance. The customer's hesitation, the context behind their feature request, the thing they didn't say: all of it gets flattened into a confident-sounding document that feeds the next confident-sounding document.

By the time you're looking at a clickable prototype, several layers of AI telephone have already occurred. The prototype looks decisive. The thinking behind it may be paper-thin.

This is where a designer's value actually lives now: not in producing artifacts (anyone can produce artifacts), but in decompressing the chain. What assumptions got baked into the PRD that nobody examined? What did the AI summary inherit from the biases in the transcript? What did the customer actually mean?

What Real Validation Actually Requires

This is where the conversation usually gets flattened. "Validation" in most product organizations means a PM schedules a few customer calls, shares a prototype, and collects reactions. That's not validation; it's confirmation bias with a calendar invite.

Real UX validation is a research discipline with distinct stages, each designed to surface a different kind of truth. The uncomfortable fact is that most teams skip the hard parts. AI doesn't fix that tendency. But it does, for the first time, remove the time cost that was often used to justify skipping them.

Problem framing. Before any artifact exists, someone needs to define what question is actually being asked. Not "does this feature work?" but "are we solving a real problem for real people, and do we understand it well enough to know what solving it looks like?" This is the stage most likely to be abandoned when a working prototype already exists. The prototype answers the wrong question before the right question has been posed.

Generative research. This is exploratory, open-ended inquiry (interviews, contextual observation, diary studies) designed to surface what customers actually experience, not to validate what a team already believes. AI can substantially accelerate the mechanical parts here: transcription, thematic coding, synthesis across sessions. Analysis that used to take a researcher two weeks can now take two hours. But the quality of the output depends entirely on the quality of the questions asked in the field. AI can synthesize patterns; it cannot replace the researcher who notices the thing a participant didn't say.

Synthesis and insight generation. Turning raw research into actionable insight has always been where the real thinking happens, and where it most often gets compressed or skipped. Affinity mapping, journey mapping, and opportunity framing all live here. AI tools are now genuinely useful at this stage: feeding transcripts into a model and asking it to identify recurring tensions, unmet needs, or moments of friction produces a useful first pass. The designer's job is to interrogate that output, not accept it. The synthesis is only as good as the judgment applied to it.

Concept testing. Most teams think validation starts when you put a prototype in front of a customer and watch what happens. The prototype isn't the problem. The questions you're asking when you show it are.

"Does this work?" and "Does this matter?" are not the same question, and they produce fundamentally different sessions. The first treats the prototype as a near-final artifact to be evaluated. The second treats it as a stimulus, a tangible way to test whether the team has understood the problem correctly before encoding any real decisions. Same prototype. Completely different posture.

The mistake isn't building a prototype early. It's walking into a customer session with the prototype framed as a solution rather than a hypothesis. When customers sense they're evaluating finished work, they respond to the execution. When they sense they're being asked whether the underlying idea is worth pursuing, they tell you something more valuable: whether you've understood their problem at all. The prototype should provoke the question, not answer it.

Co-design. This is the stage AI has transformed in a way that wasn't previously possible. Co-design (bringing customers into the design process as active participants rather than passive evaluators) used to require physical workshops, printed artifacts, and facilitated sessions. The logistics alone made it rare. Now a designer can sit with a customer on a video call, describe a problem space, build a rough prototype in real time, hand it to the customer to react to, modify it based on what they say, and repeat, all within a single session. The customer stops being a subject and becomes a collaborator. That shift in dynamic produces qualitatively different insight: people tell you different things when they're building alongside you than when they're reviewing your work.

Iterative testing and refinement. Traditional usability testing (task-based evaluation of a prototype against defined success criteria) has always been valuable, and chronically underused because of the time it takes to set up, recruit for, and analyze. AI substantially reduces that overhead. Recruiting assistants, automated note-taking, and AI-generated analysis of session recordings don't replace the trained eye of a researcher watching a participant struggle with a flow, but they remove the administrative burden that caused teams to test once instead of three times.

The through-line across all of these stages is the same: AI compresses the time cost of the mechanical work, but it cannot supply the judgment, the curiosity, or the discipline to run the process at all. A team that was skipping research before will continue to skip it, just faster. A team that understood its value will now be able to do more of it, more often, with more participants, at higher fidelity. That gap, between teams that treat validation as a checkbox and teams that treat it as a genuine discovery process, is about to get significantly wider.

Rethinking Fidelity: A Two-Axis Framework

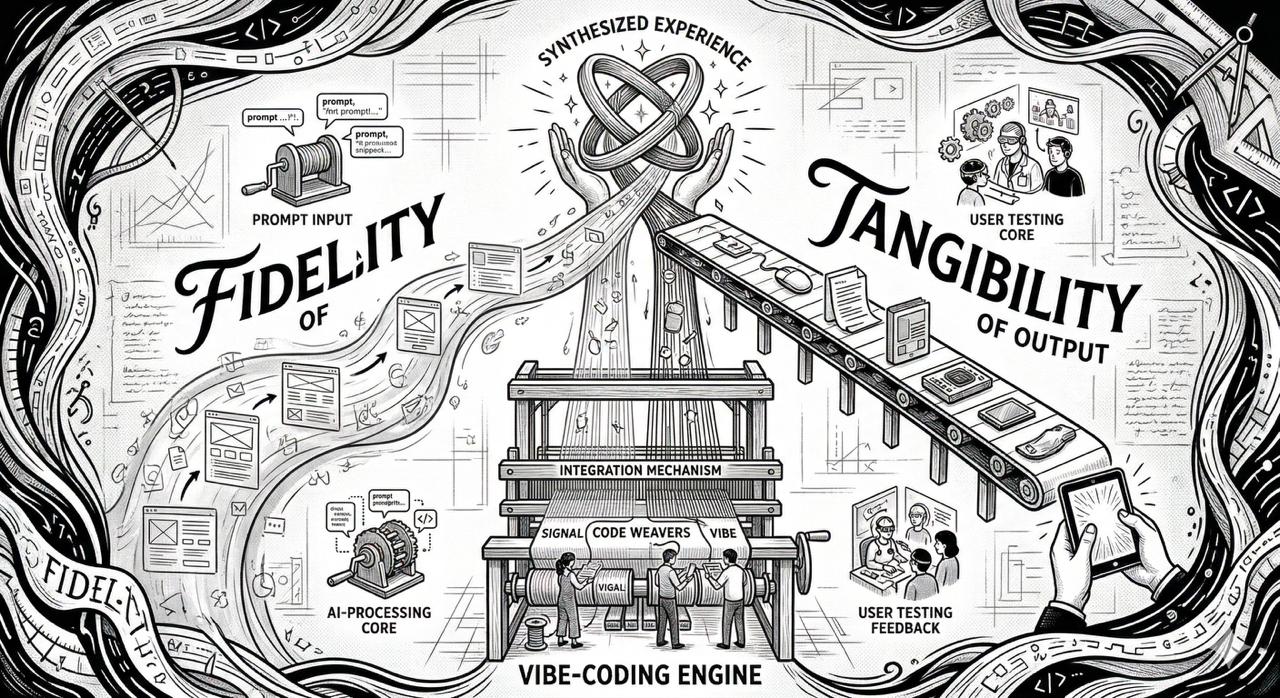

Rather than mapping fidelity on a single scale of visual polish, we need two axes: fidelity of understanding and tangibility of the output. The validation track above is what "fidelity of understanding" means in practice: not a vague quality, but a specific set of activities in a specific sequence.

Low Understanding, High Tangibility: The Danger Zone

This is the vibe-coded prototype built on untested assumptions. It looks and feels real, but nobody has validated the core premise. It's the modern version of Sipp's fidelity mismatch, except far more seductive because you can interact with it. Teams rally around it. Customers react to it. And the illusion of progress makes it politically difficult to question.

High Understanding, Low Tangibility: The Undervalued Zone

This is where research, journey mapping, and problem framing live. It is less impressive in a stakeholder demo, but this is where actual strategic value gets created. A well-defined problem space, a validated set of user needs, a clear understanding of what not to build: these are the foundations that make everything downstream better. The challenge is that in a world of instant prototypes, this work feels slow and invisible. It shouldn't. The research track described above produces exactly this kind of understanding, and AI has made it faster than it has ever been.

High Understanding, High Tangibility: The Goal

This is a prototype informed by real insight, tested against real scenarios, and built with intentionality. This is where a designer working with AI tools becomes genuinely fast and powerful. The speed of modern tooling doesn't just accelerate execution; it can accelerate exploration. Three different approaches to the same problem, each emphasizing different user needs, can be built and tested in a day. The co-design session that used to take a week to schedule, facilitate, and debrief can now happen tomorrow.

A Working Process for the New Reality

The question isn't how to insert design as a stage gate before the prototype gets built. The question is where design thinking plugs into an accelerated loop.

Ride the loop, don't fight it. If a PM is going to vibe-code a prototype from an AI-generated PRD, the designer should be shaping the inputs, not waiting for a handoff. Attend the customer meetings. Influence the prompts. Sipp's advice about bringing curiosity at project kickoff still applies, except now "kickoff" might be the moment someone opens Lovable. If you're not in the loop at that point, you're already reacting instead of leading.

Treat the prototype as a research artifact. A vibe-coded prototype is a conversation starter, not a design deliverable. Frame it explicitly with the team: "This is great for testing assumptions. Let's figure out which assumptions are baked in." That single reframe shifts the prototype from "thing we're building" to "thing we're learning from." It preserves the PM's momentum while creating space to run the real validation track against it.

Use the same tools back. If a PM can go from PRD to prototype in an afternoon, so can a designer, but with the added layer of design thinking on top. Use the speed to explore, not just execute. Show three divergent approaches. Surface edge cases by building them. Stress-test the happy path with realistic data. Run a co-design session with a customer and iterate the prototype live. The tools are neutral; what differentiates the output is the quality of the questions you're asking while you use them.

Be the person who decompresses the chain. Every layer of AI processing compresses signal. Designers can be the people who expand it back out: returning to the original meeting recording, reading the raw transcript rather than the summary, talking to the customer again, and cross-referencing the PRD's assumptions against what the research actually shows. This isn't slowing things down. It's preventing the team from building the wrong thing quickly.

Fidelity of Thinking Is the New Moat

Sipp's core insight still holds: the fidelity of the output should match the fidelity of the thinking. What's changed is that everyone can now produce high-fidelity output. PMs, engineers, founders, anyone with a prompt and a tool can generate something that looks and feels like a product.

What they cannot generate, without discipline and method, is the understanding that makes the output worth building. That understanding comes from asking the right questions before the prototype exists, from running research that is genuinely generative rather than confirmatory, from co-designing with customers instead of presenting to them, and from treating every prototype as a hypothesis rather than a deliverable.

Design has never had a better opportunity to demonstrate its strategic value. The gap between teams that think rigorously and teams that just ship is widening, and AI is the reason: it has made both paths faster. The designers who recognize this moment, and act accordingly, will be the ones who matter.

Written by a human. Edited with AI.